Yuri Suzuki and his team at Pentagram were invited by Space10 to design new ideas around technology in the home for their ‘Everyday Experiments’. The series looks at how technology can have positive effects in the home and how we can use speculative design principles to help us define what the future looks like. Each designer looked at different senses, technology and functions of the home, with Yuri focussing primarily on sound.

The positive effects of sound have long been a core tenet of Yuri’s design philosophy. Sound plays a huge role in wellbeing, technology and communication systems. Sound travels at a different speed from light, and so behaves differently from visual media. This distinctive property allows us to ask specific questions when examining the state of technology in a domestic environment.

Yuri began his experiment by looking at how spatial audio could be used to manipulate the scale of domestic space and enhance the quality of our lived experience. In a post-Covid-19 reality, we find ourselves more confined to our homes than ever before. This tests the limits of what a home can be and how it can function for multiple people and purposes, which is a considerable challenge.

We must therefore reimagine how technology can be used to improve our health and wellbeing. Claustrophobia and a lack of diversity in our daily surroundings are problems that Yuri and his team were keen to overcome through the use of sound and spatial audio.

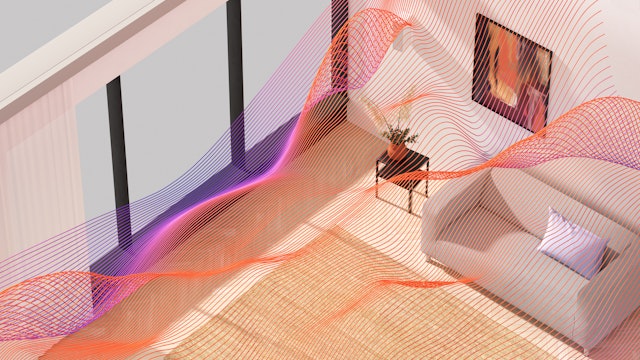

Convolution reverb is a technique that has been used in audio production for several decades. It captures an audio snapshot of a real space (an impulse response) that can then be used to recreate the acoustics of that space virtually. Yuri and team were interested in how they could take this existing technology and make it available to anyone, not just those with a background in audio engineering. The key was to create a visualiser that gives a direct link between eye and ear, and to use spatial technology to allow a more immersive 3D experience.
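For readers curious about the mechanics, the idea behind convolution reverb can be sketched in a few lines of Python. This is only an illustration, not the prototype's code: the function name `convolve` and the toy sample values are assumptions made for the example, and a real tool would use a measured impulse response and a much faster FFT-based method.

```python
def convolve(dry, impulse_response):
    """Apply a room's impulse response to a dry signal by direct convolution.

    Every input sample triggers a scaled copy of the impulse response,
    and all of those copies are summed into the output.
    """
    wet = [0.0] * (len(dry) + len(impulse_response) - 1)
    for i, sample in enumerate(dry):
        if sample == 0.0:
            continue  # silent samples add nothing to the output
        for j, h in enumerate(impulse_response):
            wet[i + j] += sample * h
    return wet


# Hypothetical toy data: a direct sound followed by two quieter reflections.
impulse_response = [1.0, 0.0, 0.5, 0.0, 0.25]

# Hypothetical dry recording: two short clicks.
dry = [1.0, 0.0, 0.0, 0.0, 0.0, 0.0, -1.0]

wet = convolve(dry, impulse_response)
print(wet)
```

Each click in the dry signal comes out followed by the same pattern of reflections, which is what makes a recording sound as though it was made in the captured space.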

The goal is to allow the user to feel that they are in control of their space, freely morphing the room shape or switching between real-world spaces. This would be achieved by first scanning the room visually via an iPad application and then connecting to IoT speakers that have both input and output capabilities. The user can then speak, sing or make environmental noise, which is played back through the IoT speakers. The user can morph or change the room shape via the app and hear the change in room dimensions sonically.

The two real-world spaces included are St Paul’s Cathedral in London and the Colosseum in Rome. Each offers a very different-sounding reverb. St Paul’s reverb is distinctive, with a long decay time (the time it takes for a sound to die out) and the effect of its famous Whispering Gallery, which allows an echoing reverberation to travel around and disperse through the architecture.
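Decay time can be estimated from an impulse response. The sketch below is an assumption-laden illustration rather than anything taken from the prototype: the function name `decay_time_seconds` is hypothetical, the 60 dB drop is the conventional threshold used in room acoustics, and the impulse response is synthetic rather than a recording of either building.

```python
def decay_time_seconds(impulse_response, sample_rate, drop_db=60.0):
    """Estimate how long the energy in an impulse response takes to fall by drop_db."""
    # Backward integration: energy still to come from each sample onwards.
    remaining = []
    total = 0.0
    for sample in reversed(impulse_response):
        total += sample * sample
        remaining.append(total)
    remaining.reverse()

    if total == 0.0:
        raise ValueError("impulse response is silent")

    # Energy level that counts as "died out", relative to the starting energy.
    threshold = remaining[0] * 10 ** (-drop_db / 10.0)
    for index, energy in enumerate(remaining):
        if energy <= threshold:
            return index / sample_rate
    return None  # the response was cut off before decaying that far


# Synthetic example (assumed values): amplitude falls by 60 dB every 2 seconds.
sample_rate = 1000
impulse_response = [10 ** (-3.0 * (i / sample_rate) / 2.0)
                    for i in range(4 * sample_rate)]
print(decay_time_seconds(impulse_response, sample_rate))
```

A large stone interior would be expected to give a much larger value than a typical living room, which is the difference the listener hears when switching spaces.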

The results of this experiment, which you can test for yourself with the audio prototype, allow you to immediately hear how space and architecture sound, providing a fascinating experience that is rarely explored.

Client

- SPACE10

Sector

- Technology

Office

- London

Partner

- Yuri Suzuki

Project team

- Maxwell Sterling

- Gabriel Vergara II

- Jake Richardson

- Marie Dommenget (3D Motion Designer)

Collaborators

- Counterpoint (audio prototyping)